Bootstrap the cluster with kubeadm:

Following on from part one, we will create our new Kubernetes cluster.

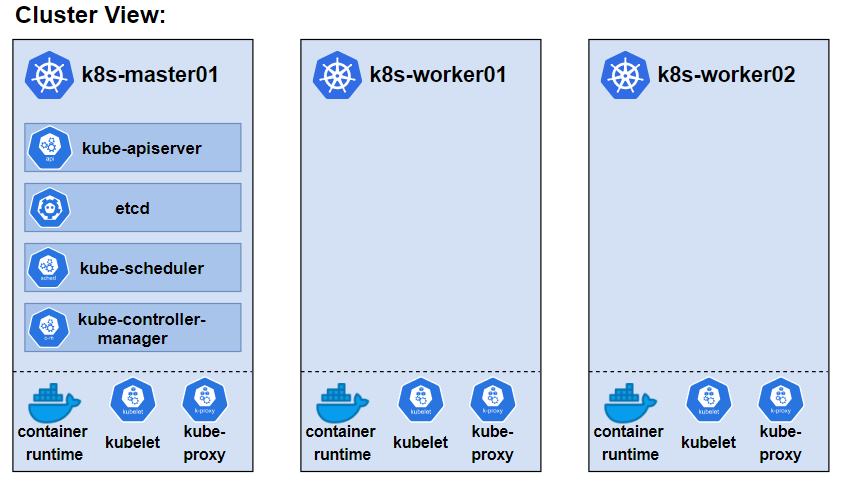

Our Kubernetes Cluster Topology:

A single master/control plane node and two worker nodes:

A View of the cluster

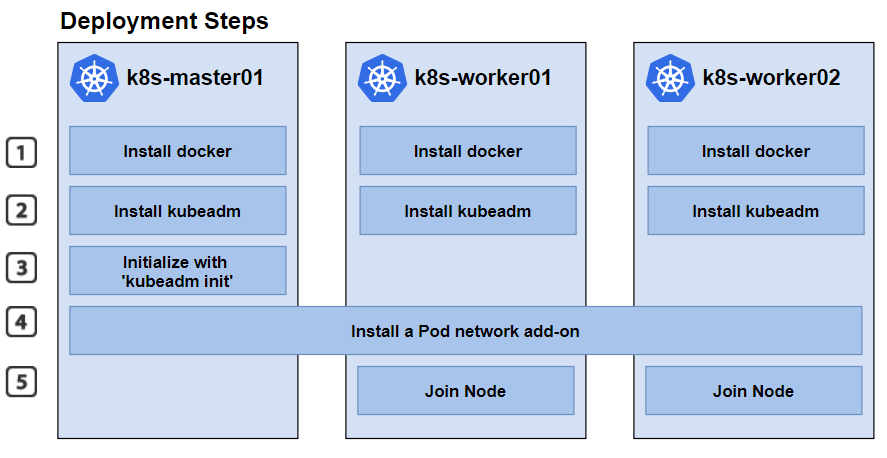

Deployment Steps

To deploy the cluster, we will first take care of the prerequisites, then install Docker and kubeadm, following the Kubernetes documentation:

- https://kubernetes.io/docs/setup/production-environment/tools/kubeadm/install-kubeadm/

- https://kubernetes.io/docs/setup/production-environment/container-runtimes/

Installing kubeadm, kubelet and kubectl

We will install these packages on all the machines:

- kubeadm: the command to bootstrap the cluster.

- kubelet: the component that runs on all of the machines in your cluster and does things like starting pods and containers.

- kubectl: the command-line utility to talk to your cluster.
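Once the packages are installed, a quick check can confirm that all three binaries are on the PATH of each machine. The following is a minimal sketch; the helper name missing_tools is made up for this post and is not part of any Kubernetes tooling.

```shell
# Print the name of every given command that is not found on PATH.
# missing_tools is a hypothetical helper, not a Kubernetes tool.
missing_tools() {
  for tool in "$@"; do
    command -v "$tool" >/dev/null 2>&1 || printf '%s\n' "$tool"
  done
}

# An empty result means kubeadm, kubelet and kubectl are all installed.
missing_tools kubeadm kubelet kubectl
```

Running it on every node before bootstrapping avoids discovering a missing package halfway through a join.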

For specific versions:

sudo apt-get install -y kubelet=1.21.0-00 kubeadm=1.21.0-00 kubectl=1.21.0-00

Initialize / Create the Cluster:

We can then create our cluster with ‘kubeadm init’

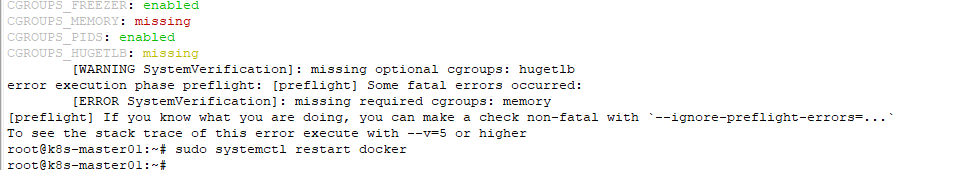

Bootstrap – Attempt 1

My first attempt failed the preflight system verification because the memory cgroup was not enabled:

root@k8s-master01:~# kubeadm init --pod-network-cidr=10.244.0.0/16 --apiserver-advertise-address=192.168.0.185

[init] Using Kubernetes version: v1.22.2

[preflight] Running pre-flight checks

[preflight] The system verification failed. Printing the output from the verification:

root@k8s-master01:~# docker info | head

Client:

Context: default

Debug Mode: false

Plugins:

app: Docker App (Docker Inc., v0.9.1-beta3)

buildx: Build with BuildKit (Docker Inc., v0.5.1-docker)

Server:

Containers: 2

Running: 0

root@k8s-master01:~# docker info | grep -i cgroup

Cgroup Driver: systemd

Cgroup Version: 1

WARNING: No memory limit support

WARNING: No swap limit support

WARNING: No kernel memory TCP limit support

WARNING: No oom kill disable support

cgroups Fix
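Before changing any boot settings, the state of the memory controller can be read straight from the kernel. The sketch below assumes a cgroup v1 system like the one reported by docker info above, and reads the "enabled" column of /proc/cgroups; memory_cgroup_enabled is a hypothetical helper name used only for illustration.

```shell
# Return success if the kernel reports the memory cgroup controller as enabled.
# /proc/cgroups has the columns: subsys_name hierarchy num_cgroups enabled.
# memory_cgroup_enabled is a hypothetical helper; the optional argument lets
# the check run against a saved copy of the file instead of the live one.
memory_cgroup_enabled() {
  awk '$1 == "memory" && $4 == 1 { ok = 1 } END { exit !ok }' \
    "${1:-/proc/cgroups}" 2>/dev/null
}

if memory_cgroup_enabled; then
  echo "memory cgroup: enabled"
else
  echo "memory cgroup: disabled or not reported"
fi
```

The same check is useful again after the reboot, to confirm the change took effect before retrying kubeadm init.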

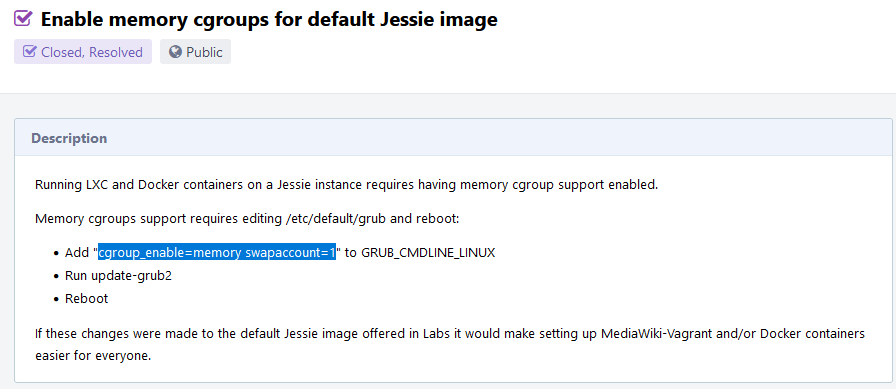

The fix is described here https://phabricator.wikimedia.org/T122734.

As there is no GRUB with Ubuntu on Raspberry Pi (https://unix.stackexchange.com/questions/475973/cant-find-etc-default-grub), I simply had to add cgroup_enable=memory swapaccount=1 to /boot/firmware/cmdline.txt and reboot.
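The edit itself amounts to appending two flags to the single line in that file. As a rough sketch, assuming the file holds exactly one line of space-separated kernel parameters, the change can be made idempotent so that running it twice does not duplicate the flags. The helper name ensure_cmdline_flags is hypothetical, and the demonstration runs on a scratch file rather than the real boot file.

```shell
# Append each flag to the single-line kernel command line in FILE unless
# it is already present. ensure_cmdline_flags is a hypothetical helper.
ensure_cmdline_flags() {
  file="$1"; shift
  line="$(head -n 1 "$file")"
  for flag in "$@"; do
    case " $line " in
      *" $flag "*) ;;             # already present, leave untouched
      *) line="$line $flag" ;;    # append to the end of the line
    esac
  done
  printf '%s\n' "$line" > "$file"
}

# Demonstration on a scratch copy rather than /boot/firmware/cmdline.txt.
demo="$(mktemp)"
printf '%s\n' 'console=tty1 root=LABEL=writable rootwait fixrtc' > "$demo"
ensure_cmdline_flags "$demo" cgroup_enable=memory swapaccount=1
cat "$demo"
```

On the Pi this would be run as root against /boot/firmware/cmdline.txt and followed by a reboot; keeping a backup copy of the file first is a sensible precaution.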

root@k8s-master01:~# cat /boot/firmware/cmdline.txt

net.ifnames=0 dwc_otg.lpm_enable=0 console=serial0,115200 console=tty1 root=LABEL=writable rootfstype=ext4 elevator=deadline rootwait fixrtc cgroup_enable=memory swapaccount=1

After reboot:

istacey@k8s-master01:~$ uptime

20:54:27 up 1 min, 1 user, load average: 1.36, 0.52, 0.19

istacey@k8s-master01:~$ cat /proc/cmdline

coherent_pool=1M 8250.nr_uarts=1 snd_bcm2835.enable_compat_alsa=0 snd_bcm2835.enable_hdmi=1 bcm2708_fb.fbwidth=0 bcm2708_fb.fbheight=0 bcm2708_fb.fbswap=1 smsc95xx.macaddr=DC:A6:32:02:F0:6E vc_mem.mem_base=0x3ec00000 vc_mem.mem_size=0x40000000 net.ifnames=0 dwc_otg.lpm_enable=0 console=ttyS0,115200 console=tty1 root=LABEL=writable rootfstype=ext4 elevator=deadline rootwait fixrtc cgroup_enable=memory swapaccount=1 quiet splash

Bootstrap – Attempt 2

The second attempt was successful:

root@k8s-master01:~# kubeadm init --pod-network-cidr=10.244.0.0/16 --apiserver-advertise-address=192.168.0.185

[init] Using Kubernetes version: v1.22.2

[preflight] Running pre-flight checks

[WARNING SystemVerification]: missing optional cgroups: hugetlb

[preflight] Pulling images required for setting up a Kubernetes cluster

[preflight] This might take a minute or two, depending on the speed of your internet connection

[preflight] You can also perform this action in beforehand using 'kubeadm config images pull'

[certs] Using certificateDir folder "/etc/kubernetes/pki"

[certs] Generating "ca" certificate and key

[certs] Generating "apiserver" certificate and key

[certs] apiserver serving cert is signed for DNS names [k8s-master01 kubernetes kubernetes.default kubernetes.default.svc kubernetes.default.svc.cluster.local] and IPs [10.96.0.1 192.168.0.185]

[certs] Generating "apiserver-kubelet-client" certificate and key

[certs] Generating "front-proxy-ca" certificate and key

[certs] Generating "front-proxy-client" certificate and key

[certs] Generating "etcd/ca" certificate and key

[certs] Generating "etcd/server" certificate and key

[certs] etcd/server serving cert is signed for DNS names [k8s-master01 localhost] and IPs [192.168.0.185 127.0.0.1 ::1]

[certs] Generating "etcd/peer" certificate and key

[certs] etcd/peer serving cert is signed for DNS names [k8s-master01 localhost] and IPs [192.168.0.185 127.0.0.1 ::1]

[certs] Generating "etcd/healthcheck-client" certificate and key

[certs] Generating "apiserver-etcd-client" certificate and key

[certs] Generating "sa" key and public key

[kubeconfig] Using kubeconfig folder "/etc/kubernetes"

[kubeconfig] Writing "admin.conf" kubeconfig file

[kubeconfig] Writing "kubelet.conf" kubeconfig file

[kubeconfig] Writing "controller-manager.conf" kubeconfig file

[kubeconfig] Writing "scheduler.conf" kubeconfig file

[kubelet-start] Writing kubelet environment file with flags to file "/var/lib/kubelet/kubeadm-flags.env"

[kubelet-start] Writing kubelet configuration to file "/var/lib/kubelet/config.yaml"

[kubelet-start] Starting the kubelet

[control-plane] Using manifest folder "/etc/kubernetes/manifests"

[control-plane] Creating static Pod manifest for "kube-apiserver"

[control-plane] Creating static Pod manifest for "kube-controller-manager"

[control-plane] Creating static Pod manifest for "kube-scheduler"

[etcd] Creating static Pod manifest for local etcd in "/etc/kubernetes/manifests"

[wait-control-plane] Waiting for the kubelet to boot up the control plane as static Pods from directory "/etc/kubernetes/manifests". This can take up to 4m0s

[apiclient] All control plane components are healthy after 28.511339 seconds

[upload-config] Storing the configuration used in ConfigMap "kubeadm-config" in the "kube-system" Namespace

[kubelet] Creating a ConfigMap "kubelet-config-1.22" in namespace kube-system with the configuration for the kubelets in the cluster

[upload-certs] Skipping phase. Please see --upload-certs

[mark-control-plane] Marking the node k8s-master01 as control-plane by adding the labels: [node-role.kubernetes.io/master(deprecated) node-role.kubernetes.io/control-plane node.kubernetes.io/exclude-from-external-load-balancers]

[mark-control-plane] Marking the node k8s-master01 as control-plane by adding the taints [node-role.kubernetes.io/master:NoSchedule]

[bootstrap-token] Using token: oq7hb9.vtmiw210ozvi2grh

[bootstrap-token] Configuring bootstrap tokens, cluster-info ConfigMap, RBAC Roles

[bootstrap-token] configured RBAC rules to allow Node Bootstrap tokens to get nodes

[bootstrap-token] configured RBAC rules to allow Node Bootstrap tokens to post CSRs in order for nodes to get long term certificate credentials

[bootstrap-token] configured RBAC rules to allow the csrapprover controller automatically approve CSRs from a Node Bootstrap Token

[bootstrap-token] configured RBAC rules to allow certificate rotation for all node client certificates in the cluster

[bootstrap-token] Creating the "cluster-info" ConfigMap in the "kube-public" namespace

[kubelet-finalize] Updating "/etc/kubernetes/kubelet.conf" to point to a rotatable kubelet client certificate and key

[addons] Applied essential addon: CoreDNS

[addons] Applied essential addon: kube-proxy

Your Kubernetes control-plane has initialized successfully!

To start using your cluster, you need to run the following as a regular user:

mkdir -p $HOME/.kube

sudo cp -i /etc/kubernetes/admin.conf $HOME/.kube/config

sudo chown $(id -u):$(id -g) $HOME/.kube/config

Alternatively, if you are the root user, you can run:

export KUBECONFIG=/etc/kubernetes/admin.conf

You should now deploy a pod network to the cluster.

Run "kubectl apply -f [podnetwork].yaml" with one of the options listed at:

https://kubernetes.io/docs/concepts/cluster-administration/addons/

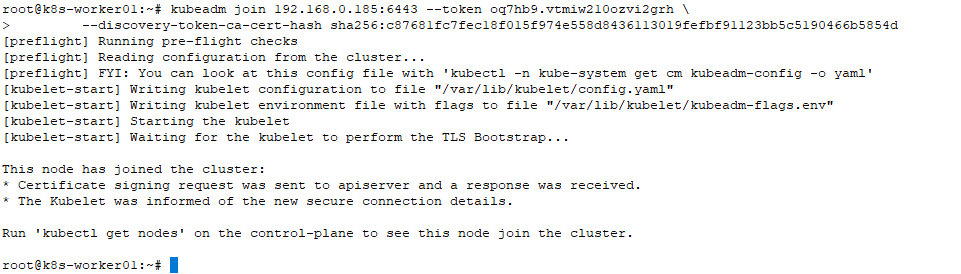

Then you can join any number of worker nodes by running the following on each as root:

kubeadm join 192.168.0.185:6443 --token oq7hb9.vtmiw210ozvi2grh \

--discovery-token-ca-cert-hash sha256:c87681fc7fec18f015f974e558d8436113019fefbf91123bb5c5190466b5854d

root@k8s-master01:~#

Install the pod network add-on (CNI)

We will use Weave Net for this cluster:

https://www.weave.works/docs/net/latest/kubernetes/

https://www.weave.works/docs/net/latest/kubernetes/kube-addon/

istacey@k8s-master01:~$ kubectl apply -f "https://cloud.weave.works/k8s/net?k8s-version=$(kubectl version | base64 | tr -d '\n')"

serviceaccount/weave-net created

clusterrole.rbac.authorization.k8s.io/weave-net created

clusterrolebinding.rbac.authorization.k8s.io/weave-net created

role.rbac.authorization.k8s.io/weave-net created

rolebinding.rbac.authorization.k8s.io/weave-net created

daemonset.apps/weave-net created

istacey@k8s-master01:~$

Check Nodes are ready and pods running:

istacey@k8s-master01:~$ kubectl get nodes

NAME STATUS ROLES AGE VERSION

k8s-master01 Ready control-plane,master 13m v1.22.2

istacey@k8s-master01:~$ kubectl get pods -A

NAMESPACE NAME READY STATUS RESTARTS AGE

kube-system coredns-78fcd69978-cnql6 1/1 Running 0 14m

kube-system coredns-78fcd69978-k4bnk 1/1 Running 0 14m

kube-system etcd-k8s-master01 1/1 Running 0 14m

kube-system kube-apiserver-k8s-master01 1/1 Running 0 14m

kube-system kube-controller-manager-k8s-master01 1/1 Running 0 14m

kube-system kube-proxy-lx8bj 1/1 Running 0 14m

kube-system kube-scheduler-k8s-master01 1/1 Running 0 14m

kube-system weave-net-f7f7h 2/2 Running 1 (99s ago) 2m2s

Join the two Worker Nodes:

To get the join token:

kubeadm token create --help

kubeadm token create --print-join-command
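The join command printed by kubeadm carries a bootstrap token and a CA certificate hash. As a small sanity check before pasting it onto a worker, the sketch below validates the shape of both values. It assumes the usual formats: a token of six lowercase alphanumerics, a dot, then sixteen more, and a hash of the form sha256: followed by 64 hex digits. The helper names are hypothetical.

```shell
# Check that a string looks like a kubeadm bootstrap token (abcdef.0123456789abcdef).
# is_bootstrap_token and is_ca_cert_hash are hypothetical helpers.
is_bootstrap_token() {
  printf '%s\n' "$1" | grep -Eq '^[a-z0-9]{6}\.[a-z0-9]{16}$'
}

# Check that a string looks like a --discovery-token-ca-cert-hash value.
is_ca_cert_hash() {
  printf '%s\n' "$1" | grep -Eq '^sha256:[0-9a-f]{64}$'
}

# Values taken from the kubeadm init output earlier in this post.
is_bootstrap_token 'oq7hb9.vtmiw210ozvi2grh' && echo "token format ok"
is_ca_cert_hash 'sha256:c87681fc7fec18f015f974e558d8436113019fefbf91123bb5c5190466b5854d' \
  && echo "hash format ok"
```

This only catches copy-and-paste truncation; it does not prove the token is still valid on the control plane.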

Check once the two nodes have joined:

istacey@k8s-master01:~$ kubectl get nodes

NAME STATUS ROLES AGE VERSION

k8s-master01 Ready control-plane,master 18m v1.22.2

k8s-worker01 Ready <none> 2m4s v1.22.2

k8s-worker02 Ready <none> 51s v1.22.2

istacey@k8s-master01:~$ kubectl get ds -n kube-system

NAME DESIRED CURRENT READY UP-TO-DATE AVAILABLE NODE SELECTOR AGE

kube-proxy 3 3 3 3 3 kubernetes.io/os=linux 18m

weave-net 3 3 3 3 3 <none> 6m10s

Quick Test:

istacey@k8s-master01:~$ kubectl run nginx --image=nginx

pod/nginx created

istacey@k8s-master01:~$ kubectl get pods -o wide

NAME READY STATUS RESTARTS AGE IP NODE NOMINATED NODE READINESS GATES

nginx 0/1 ContainerCreating 0 23s <none> k8s-worker01 <none> <none>

istacey@k8s-master01:~$ kubectl get pods -o wide

NAME READY STATUS RESTARTS AGE IP NODE NOMINATED NODE READINESS GATES

nginx 1/1 Running 0 27s 10.44.0.1 k8s-worker01 <none> <none>

istacey@k8s-master01:~$ kubectl delete po nginx

pod "nginx" deleted
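One detail in the test output is worth a second look: the nginx pod received 10.44.0.1, which is outside the 10.244.0.0/16 range passed to kubeadm init. A likely explanation is that Weave Net allocates pod addresses from its own range (10.32.0.0/12 by default) rather than from --pod-network-cidr. The sketch below checks that with some integer arithmetic. It handles IPv4 only, and ip_to_int and ip_in_cidr are hypothetical helpers written for this post.

```shell
# Convert a dotted-quad IPv4 address to a single integer.
# ip_to_int and ip_in_cidr are hypothetical helpers (IPv4 only).
ip_to_int() {
  old_ifs="$IFS"; IFS=.
  set -- $1                      # split the address into its four octets
  IFS="$old_ifs"
  echo $(( ($1 << 24) + ($2 << 16) + ($3 << 8) + $4 ))
}

# Return success if address $1 falls inside the CIDR block $2.
ip_in_cidr() {
  ip="$1"; net="${2%/*}"; bits="${2#*/}"
  mask=$(( (4294967295 << (32 - bits)) & 4294967295 ))
  [ $(( $(ip_to_int "$ip") & mask )) -eq $(( $(ip_to_int "$net") & mask )) ]
}

# Compare the pod IP against the kubeadm range and Weave Net's default range.
for range in 10.244.0.0/16 10.32.0.0/12; do
  if ip_in_cidr 10.44.0.1 "$range"; then
    echo "10.44.0.1 is inside $range"
  else
    echo "10.44.0.1 is outside $range"
  fi
done
```

If pod addresses need to come from a particular range, that is something to configure in the CNI add-on itself rather than rely on the kubeadm flag alone.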